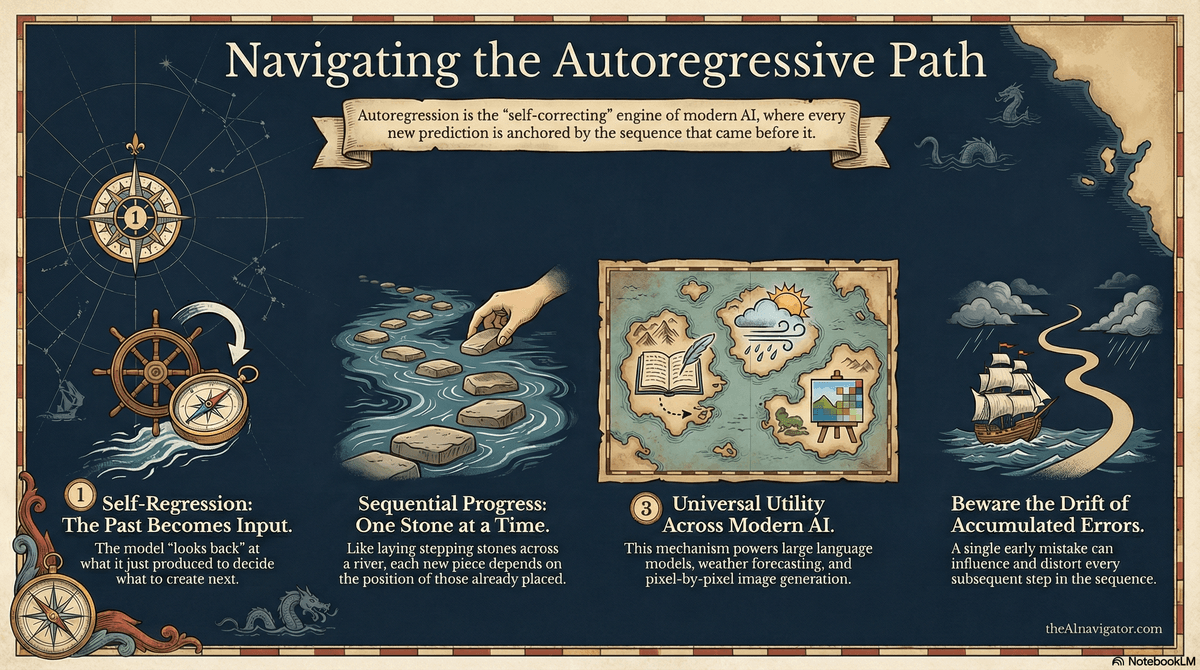

Autoregression in AI refers to a way of generating predictions where a model uses its own previous outputs as inputs for the next step. The word literally means “self-regression,” and that’s a helpful way to picture it: the model keeps looking back at what it just produced to decide what to produce next.

In many modern AI systems, especially large language models like GPT-4, autoregression is the core mechanism behind text generation. Imagine writing a sentence one word at a time. The model starts with a prompt, estimates how likely each possible next word is (in practice it works with tokens, which can be whole words or pieces of words), picks one, then takes that new word into account to predict the following one, and so on. It’s like laying down stepping stones across a river: each new stone depends on the position of the ones already placed.
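The word-by-word loop described above can be sketched in a few lines of Python. This is only a toy illustration, not how GPT-4 or any real model is implemented: the `NEXT_TOKEN_PROBS` table, its probabilities, and the `generate` function are all made up for this example, and the "model" looks only at the last token, whereas a real language model conditions on the whole preceding text.

```python
# Hypothetical toy "model": for each token, made-up probabilities of the next token.
# A real language model computes these from the entire preceding context.
NEXT_TOKEN_PROBS = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "dog": {"ran": 0.8, "sat": 0.2},
    "sat": {"down": 0.9, "<end>": 0.1},
    "ran": {"away": 0.9, "<end>": 0.1},
    "down": {"<end>": 1.0},
    "away": {"<end>": 1.0},
}


def generate(prompt, max_new_tokens=10):
    """Extend the prompt one token at a time, feeding each output back in."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        probs = NEXT_TOKEN_PROBS.get(tokens[-1])
        if probs is None:
            break  # the toy table has no entry for this token
        # Greedy choice: take the single most likely next token.
        next_token = max(probs, key=probs.get)
        if next_token == "<end>":
            break
        tokens.append(next_token)  # the output becomes part of the next input
    return tokens


print(" ".join(generate(["the"])))  # the cat sat down
```

Always taking the most likely token (greedy decoding) is just one option. Real systems often sample from the probabilities instead, which is why the same prompt can produce different text on different runs.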

Autoregression is not limited to text. It’s also used in speech generation, time-series forecasting (like predicting stock prices or weather), and image generation models. For example, some image models generate pictures pixel by pixel or patch by patch, with each new part influenced by what has already been generated. You can visualize it as painting a canvas gradually, where each brushstroke depends on the colors and shapes already on the canvas.
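The same idea applies to numbers. Here is a minimal sketch of a time-series forecast, assuming a simple linear autoregressive model in which the next value is a weighted sum of the most recent ones. The function name `forecast` and the coefficients are hypothetical and chosen for easy arithmetic; in practice the weights would be fitted to real data.

```python
def forecast(history, coeffs, steps):
    """Roll a linear autoregressive model forward.

    coeffs[0] weights the most recent value, coeffs[1] the one before it,
    and so on. Each prediction is appended and reused as an input.
    """
    p = len(coeffs)
    if len(history) < p:
        raise ValueError("need at least as many past values as coefficients")
    values = list(history)
    for _ in range(steps):
        recent = values[-p:][::-1]  # most recent value first
        next_value = sum(c * v for c, v in zip(coeffs, recent))
        values.append(next_value)  # the prediction becomes future input
    return values[len(history):]


# Illustrative numbers only: two past observations, two made-up weights.
print(forecast([1.0, 2.0], coeffs=[0.5, 0.25], steps=3))  # [1.25, 1.125, 0.875]
```

After the first step, the forecast is built partly on the model's own earlier predictions rather than on observed data, which is exactly what makes it autoregressive.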

This approach is powerful because it captures sequential patterns—grammar in language, rhythm in audio, or trends in data over time. However, it can also accumulate errors: if the model makes a small mistake early on, that mistake can influence everything that follows. Even so, autoregression remains one of the most important building blocks in modern generative AI systems.
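To see how one early mistake can carry forward, consider a deliberately simple sketch. The rule used here (each value is double the previous one) and the `rollout` function are hypothetical, picked only so the arithmetic is easy to follow. With this rule the gap grows at every step; under other rules an early error might stay constant or fade.

```python
def rollout(start, steps, error_at=None, error=0):
    """Apply the toy rule next = 2 * previous, feeding outputs back in.

    If error_at is set, a one-off mistake of size `error` is added to the
    output of that step, and every later step builds on the flawed value.
    """
    values = []
    current = start
    for step in range(steps):
        current = 2 * current
        if step == error_at:
            current += error  # simulate a single small mistake
        values.append(current)
    return values


clean = rollout(1, steps=4)
flawed = rollout(1, steps=4, error_at=0, error=1)
gaps = [f - c for f, c in zip(flawed, clean)]

print(clean)   # [2, 4, 8, 16]
print(flawed)  # [3, 6, 12, 24]
print(gaps)    # [1, 2, 4, 8]
```

The mistake happens only once, at the first step, yet every later value is off because each one is computed from the flawed output before it.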
